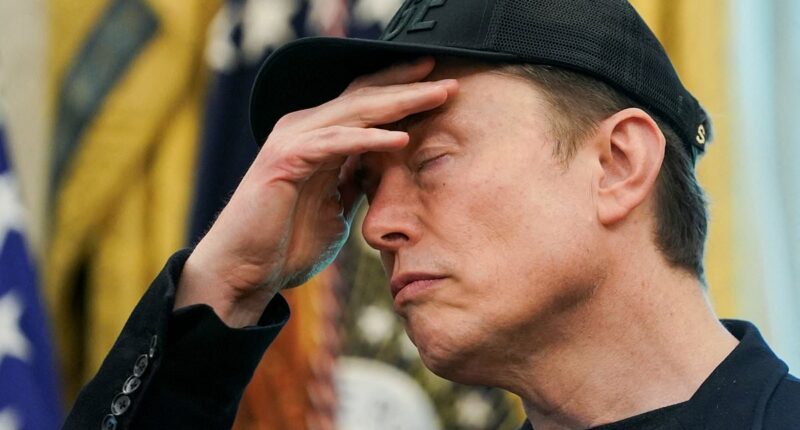

Elon Musk has taken steps to address the issue of X users who are capitalizing on AI-generated videos about the Middle East conflict.

The platform announced that users sharing these AI-created war videos without proper labeling will face a 90-day suspension from X’s monetization program.

Further breaches of this policy could lead to a permanent ban from the program, according to an announcement by Nikita Bier, the company’s product head, on Tuesday.

Bier highlighted the ease with which AI technology can now produce misleading content, emphasizing the importance of accuracy.

“In wartime, it’s crucial that people receive genuine information from those directly affected,” he explained.

This policy update follows recent US and Israeli military actions against Iran, which have intensified conflict in the region and resulted in a surge of misleading AI content online.

One fake video showed what were purported to be Israeli soldiers weeping in fear at an Iranian strike. That clip has more than 1.4 million views.

Another fabricated clip, viewed by more than 2.1 million people, showed Dubai’s Burj Khalifa completely engulfed in flames after supposedly being attacked by Iran.

A separate video posted on X claimed to show ‘Iranian missiles hit[ting] central Israel,’ with footage appearing to depict a massive blast on a building.

In reality, the clip was AI-generated, and users on X marked it as such.

The company said Tuesday that AI-made content would be marked either through crowdsourced notes from users or by metadata and other signals indicating generative AI tools.

Another video shared on X falsely claimed that Iranian ballistic missiles had obliterated ‘everything in their path’ in Tel Aviv.

The AI-generated footage showed what appeared to be a barrage of rockets raining down on the Mediterranean city.

Explosions and clouds of smoke could be seen in the distance, as the user apparently filming the footage zoomed in.

Another post described an attack on an unnamed Israeli airport and included video that appeared to capture it.

However, the seemingly terrifying scenes were actually entirely fabricated by AI.

Some ways to spot whether a video has been generated by AI include low picture quality and very short durations, according to the BBC.

Some AI bots also rely on out-of-date information, which can show up in videos as inaccurate depictions of locations.

Strange textures or an almost airbrushed look can also be indicators of AI-generated content, per the Better Business Bureau.

Other giveaways include physical inconsistencies and unnatural shadows or lighting.

Strangely enough, typos can actually be an encouraging sign – because humans are likelier to make them than machines.

Musk has predicted that AI-made video is the future of content, even as his own platform seeks to combat misinformation propagated by the technology.

‘Most of what people consume in five or six years – maybe sooner than that – will be just AI-generated content,’ Musk said in October.

Users who post AI-made war videos without labeling them will be suspended from X’s monetization program, initially for 90 days and then permanently.

Under the new guidelines, X users will need to add the ‘Made with AI’ label by pressing the menu on the post and selecting Add Content Disclosures.

The move was praised by the Trump administration.

‘This is a great complement to X’s community notes system, which results in less “reach” (thus monetization) for content annotated as inaccurate,’ Sarah Rogers, the under secretary of state for public diplomacy, said.

Rogers added: ‘You don’t need a Ministry of Truth to incentivize truth online.’

The shift comes as the company continues to tighten its AI guardrails.

Last month, X announced that it would make tweaks to its AI tool Grok in order to prevent overly sexualized photos from being created.

Grok had previously come under fire for posting about antisemitic tropes and claims of white genocide.