Starting next week, Instagram will begin alerting parents when their teenagers search for terms linked to suicide or self-harm. Meta, Instagram's parent company, has announced that a similar alert feature for its AI chatbots is expected to launch later this year.

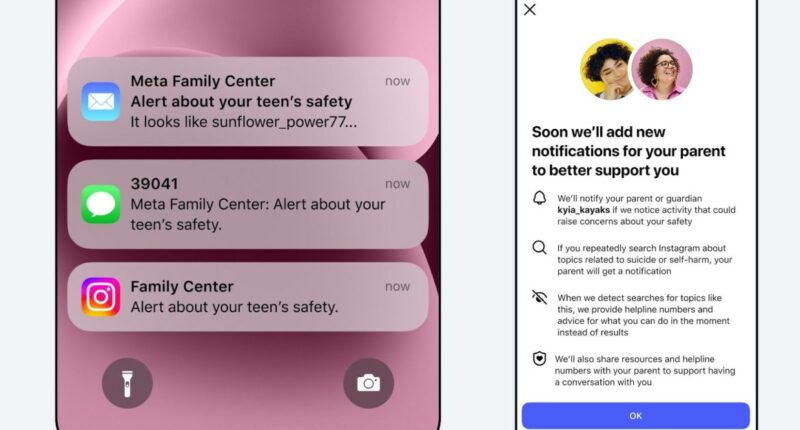

The new Instagram tool will notify parents if their teen repeatedly searches for keywords associated with suicide or self-harm within a short period of time. At launch, the feature will be available in the United States, the United Kingdom, Australia, and Canada, and only for parents and teens who have opted into supervision. Instagram plans to extend it to other countries by the end of the year.

Instagram noted that most teenagers do not seek out suicide or self-harm content on the platform. When such searches are attempted, Instagram blocks them and directs users to support resources and helplines instead. "Our intention is to enable parents to intervene when a teen's searches indicate they may need help," the company stated. Instagram also said it aims to avoid sending too many notifications, so that each alert remains meaningful.

Parents will receive alerts by email, text message, or WhatsApp, depending on the contact details available. Each alert will be accompanied by an in-app notification offering optional guidance on how to discuss these sensitive topics with their teen.