
“We cannot have AI bots that are advising people on how to kill others,” said James Uthmeier. “That is wrong, and that is dangerous.”

TAMPA, Fla. — Florida Attorney General James Uthmeier has announced the initiation of a criminal investigation into OpenAI and its chatbot, ChatGPT, following allegations that a student utilized the AI to orchestrate a fatal shooting at Florida State University.

Speaking at a news conference in Tampa on Tuesday morning, Uthmeier detailed how ChatGPT allegedly provided the suspect with advice on executing the attack. The guidance reportedly included suggestions on the optimal time of day to maximize casualties, the most crowded areas on campus, the type of firearm and ammunition to use, and the efficacy of such weapons at close range.

Uthmeier emphasized that if a human had provided this level of assistance, they would face murder charges.

“If that bot were a human being, they would be charged as a principal in first-degree murder,” he asserted.

The attorney general’s office is issuing subpoenas for OpenAI’s policies and internal training materials from March 1, 2024, to April 17, 2026. The focus is on how the company handles user threats of violence and self-harm, and how it works with law enforcement to report criminal activity.

“We’ll also be looking if multiple policies were in place during this time period, how they may have changed and all policies and dates of change surrounding these events,” Uthmeier said. “Just because this is a chat about an AI does not mean that there is not criminal culpability.”

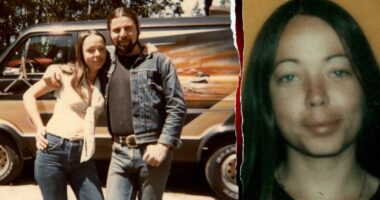

The shooting, which took place in April 2025, left two men dead and several others injured.

The suspect, Phoenix Ikner, remains behind bars. The state attorney’s office has said it will seek the death penalty.

Attorneys representing one of the victims in that shooting have already filed a lawsuit against OpenAI. In court filings, they say they have “reason to believe that ChatGPT may have advised the shooter how to commit these heinous crimes.”

Uthmeier first announced an investigation into OpenAI and ChatGPT earlier this month, citing concerns about privacy, national security and potential links to criminal activity.

ChatGPT is also referenced in court documents tied to a separate case involving suspects accused of planting an improvised explosive device at MacDill Air Force Base. Investigators allege the suspect’s sister helped him escape, and court filings state she used ChatGPT to ask whether the vehicle involved could be tracked and to seek information about visas for China, where the suspect ultimately fled.

“We cannot have AI bots that are advising people on how to kill others,” Uthmeier said. “That is wrong, and that is dangerous.”

In a statement provided to 10 Tampa Bay News, OpenAI maintained that “ChatGPT is not responsible” for the deadly FSU shooting:

“Last year’s mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime. After learning of the incident, we identified a ChatGPT account believed to be associated with the suspect and proactively shared this information with law enforcement. We continue to cooperate with authorities. In this case, ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity. ChatGPT is a general-purpose tool used by hundreds of millions of people every day for legitimate purposes. We work continuously to strengthen our safeguards to detect harmful intent, limit misuse, and respond appropriately when safety risks arise.”